Justin Baker speaks at ACII 2023

Justin Baker gives a keynote lecture, "Sensing Psychosis: Deep Phenotyping of Neuropsychiatric Disorders," at the Affective Computing and Intelligent Interaction (ACII) 2023 conference.

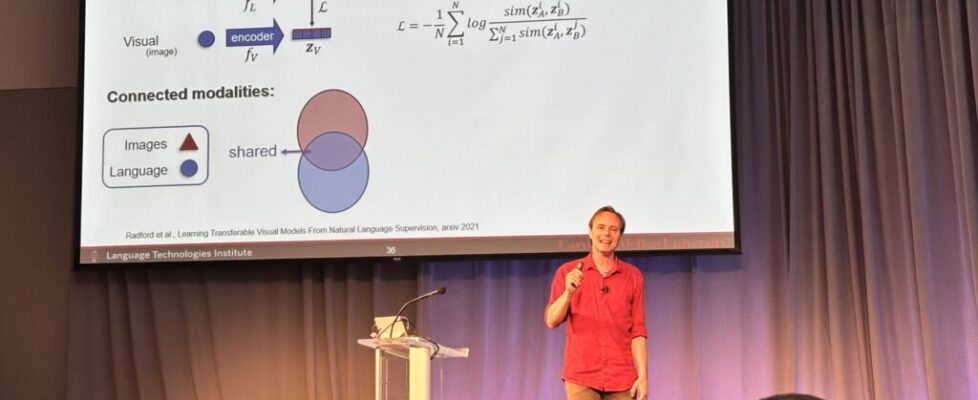

Louis-Philippe Morency gives a keynote lecture, "What is Multimodal?", at the Affective Computing and Intelligent Interaction (ACII) 2023 conference.
